Intel unveils new AI chip system up to 1,000 times faster than general-purpose CPUs

Credit: Cnet

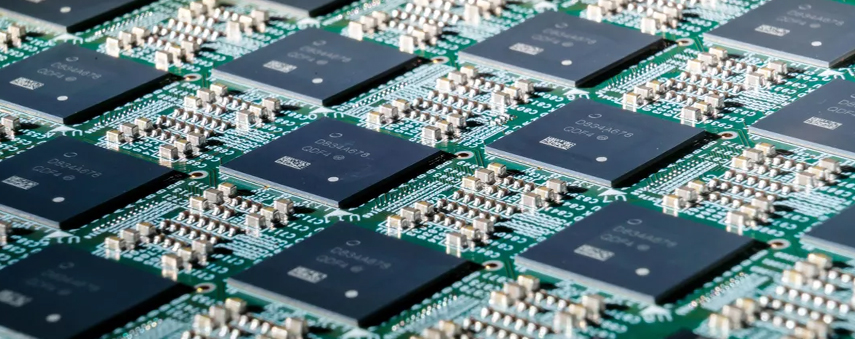

Pohoiki Beach, Intel’s brand-new neuromorphic AI system, contains 64 processors and imitates the learning ability and energy efficiency of the human brain.

Professor Jeff Lichtman begins his courses by asking his students how much we collectively know about the human brain. If everything we need to know about the brain were stretched out over a mile, how much of that mile have we already walked? Answers naturally vary, but Professor Lichtman believes the correct reply is three inches.

Despite our lack of concrete knowledge about the organ that dictates everything we think and feel, artificial intelligence is hurtling towards the day that it surpasses the human brain. We already have artificial neural networks loosely modelled on the way our own neurons behave. AI chips packed with processors attempt to replicate a human’s ability to learn, and the technology is popular for all kinds of AI applications.

Intel’s new Pohoiki Beach system can process data up to 1,000 times faster than general-purpose CPUs and GPUs whilst using less power. Researchers are already using the technology to help prosthetic limbs adapt to uneven ground and to build more precise maps for autonomous vehicles.

According to Rich Uhlig, Managing Director of Intel Labs, “Pohoiki Beach [is a] specialised system to solve complex, compute-intensive problems.”

What is a neuromorphic system?

The first semiconductor chips could hold two transistors each. Very-large-scale integration (VLSI) began as long ago as the 1970s, when complex semiconductor technology was evolving. It is the process of building an integrated circuit (IC) by combining millions of transistors onto a single chip.

Fast-forward to the 1980s and the term “neuromorphic computing” was coined to describe the use of VLSI systems containing electronic circuits to replicate neuro-biological architectures. Transistor counts have climbed steeply since then. The Intel 4004 CPU from 1971 contained 2,300 transistors; 45 years later, the Qualcomm Snapdragon 835 contained 3 billion. The Apple A11 Bionic chip used in the iPhone X has 4.3 billion transistors.
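To put those transistor counts in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes steady exponential growth between the two chips quoted above, which is a simplification, and the function name is purely illustrative.

```python
import math

def doubling_time_years(count_start, count_end, years):
    """Average time for the transistor count to double, assuming
    smooth exponential growth between the two data points."""
    doublings = math.log2(count_end / count_start)
    return years / doublings

# Figures quoted in the article: Intel 4004 (1971) vs Snapdragon 835 (45 years later)
years_per_doubling = doubling_time_years(2_300, 3_000_000_000, 45)
print(f"Transistor count doubled roughly every {years_per_doubling:.1f} years")
```

On these figures the count doubles a little more often than once every two and a half years, which is broadly in line with the pace usually associated with Moore’s law.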

A single Loihi chip has 2 billion transistors, and the Pohoiki Beach system comprises 64 of them. Each chip provides roughly 130,000 artificial neurons and 130 million synapses, which gives the full system about 8.3 million neurons. These neurons are not the same thing as transistors: they transmit information in a way much closer to the biological processes of the brain than traditional silicon does. The Loihi chip uses an asynchronous spiking neural network (SNN) to implement adaptive, self-modifying, event-driven computations, which lets it carry out learning and inference with high efficiency.
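For readers who want a feel for what “spiking” means, the following is a minimal sketch of a leaky integrate-and-fire neuron, a common textbook model of a spiking neuron. It is not Intel’s code or Loihi’s actual neuron model; the function name and the `weight`, `leak` and `threshold` values are assumptions chosen purely for illustration.

```python
def simulate_lif(input_spikes, weight=0.6, leak=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_spikes: sequence of 0/1 values, one per time step.
    Returns the list of time steps at which the neuron fired.
    All parameter values are illustrative, not taken from Loihi.
    """
    potential = 0.0
    fired_at = []
    for step, spike in enumerate(input_spikes):
        potential *= leak            # stored charge leaks away a little each step
        if spike:
            potential += weight      # an incoming spike adds charge
        if potential >= threshold:
            fired_at.append(step)    # the neuron emits its own spike ...
            potential = 0.0          # ... and resets
    return fired_at

dense = simulate_lif([1, 1, 0, 0, 1, 1, 1])      # spikes arriving close together
sparse = simulate_lif([1, 0, 0, 0, 1, 0, 0, 0])  # spikes arriving far apart
print(dense, sparse)
```

In this toy model the neuron only fires when input spikes arrive close enough together to outrun the leak, so the timing of events carries information. Real neuromorphic hardware goes further by doing work only when a spike arrives, whereas this loop simply steps through time.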

The big difference between neuromorphic processors and the processors in your computer is the way they handle data: the former work in a more analogue fashion than conventional digital logic. Just like the brain’s synapses, they can vary the intensity and timing of the signals they send, rather than passing along fixed binary values on every clock cycle.

The idea is loosely comparable to quantum computing, in which a qubit can be in a superposition of one and zero: both approaches move away from strictly binary values.

How important is the new Intel chip system?

Intel has claimed that it will release an even larger design, codenamed Pohoiki Springs, which will give “an unprecedented level of performance and efficiency for scaled-up neuromorphic workloads.”

Despite the neuromorphic timeline stretching all the way back to when flares were in fashion, we still don’t know for certain if neuromorphic chips are suitable for mass production. This is uncharted territory for computing: neuromorphic engineering relies on a totally different structure to the computing we know.

Loihi’s SNN imitates the brain’s behaviour and that brings us a little closer to understanding how the brain works; perhaps a fraction of an inch closer by Professor Lichtman’s reckoning.

The Loihi chip is particularly impressive to many because it demonstrates 109 times lower power consumption than a GPU. When a network is scaled up 50 times, the chip maintains real-time performance with only 30% more power. To put that in context, IoT hardware uses 500% more power when scaled up in the same way.
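The scaling figures are easier to compare as multipliers. The short sketch below assumes that “30% more” and “500% more” are each measured against that device’s own power draw before the network was scaled up, and that the IoT figure refers to the same 50-times scale-up; the article does not give absolute wattages, so none are invented here.

```python
def power_multiplier(extra_power_percent):
    """Convert 'X% more power' into a multiplier of the original power draw."""
    return 1 + extra_power_percent / 100

SCALE_UP = 50  # the network is made 50 times larger

loihi_multiplier = power_multiplier(30)   # Loihi after scaling
iot_multiplier = power_multiplier(500)    # IoT hardware after scaling (assumed same scale-up)

# How much faster the IoT hardware's power draw grows than Loihi's
growth_ratio = iot_multiplier / loihi_multiplier

print(f"Loihi: {loihi_multiplier:.1f}x power for a {SCALE_UP}x larger network")
print(f"IoT hardware: {iot_multiplier:.1f}x power for the same scale-up")
print(f"IoT power grows about {growth_ratio:.1f} times as much as Loihi's")
```

Under those assumptions, Loihi ends up drawing 1.3 times its original power while the IoT hardware draws 6 times its own, so the IoT device’s power budget grows between four and five times as much for the same increase in network size.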

Neuromorphic systems are just the start of a whole new world for computing: one in which, experts have suggested, we could eventually grant personhood to machines if they become truly equal to our own neural capacity.

Pohoiki Beach is another step forward for neuromorphic technology. It’s expected that Intel will be able to simulate 100 million neurons by the end of 2019.